Generation of a Theory of Generative Design With the Help of Game Theory

Asli Serbest (Dipl.-Ing.)

Mona Mahall (M.A.)

m.mahall@igma.uni-stuttgart.de

Institut für Grundlagen Moderner Architektur und Entwerfen (IGMA), Fakultät für Architektur, Universität Stuttgart, Stuttgart, Germany

Abstract

Generative design is anchored in automata theory, minimal art and arte programmata. This text tries to bring together these different approaches, all of which apply a strong theory of communication to art and design. To model generative design as a communicative game situation, it draws an arc from John von Neumann to Marcel Duchamp, Donald Judd, GRAV and back.

1. To Start with Ramon Llull

Linking generative art, or more generally generative design, to some kind of programming seems safe enough. All the more so if we follow John Warnock, programmer and co-founder of Adobe Systems, who compared the coding of software to the acting out of dreams; for us a quite optimistic but, after all, short, sweet, and heroic definition of design. Warnock, as a designer of application software especially for graphics use, might be the one to know a thing or two about coding.

We could be the ones to engage in a theory of generative design, which could actually be described as the post-modern version of Warnock’s notion of design. The modern and definitely heroic artist came all alone to a sovereign and subjective decision and all alone created a totally new artwork by renouncing and negating tradition, indeed by acting out his dreams. In contrast to this classic modern position, generative art theorizing is determined by a post-modern or post-revolutionary attitude. As such it draws the late consequences of structuralist and cybernetic theories, as it focuses on the art system, its elements, its relations and its functions, at the cost of the artistic subject: once the creative center, driven in the modern manner by the subconscious or by political and social missions, the subject is now degraded, and certainly liberated, to a position at the corner. Or, following the arguments of generative art, to the very ground, where only rules are to be instituted. These basic rules or algorithms mark the limits of an autonomous generative process that then starts, and out of which a somewhat unpredictable artwork results. Composed or constructed in such a manner through the use of systems defined by computer software algorithms, or by similar mathematical or mechanical randomized autonomous processes, the artwork becomes a co-production of the artist and a system in operation; the latter is complex to a degree that makes behavioral predictions difficult or even impossible. Thus the artist programs an artwork, in accordance with the Greek etymology of ‘to program’, which combines ‘before’ and ‘to write’. The artist codes a set of rules, which then starts an open-ended process, which in turn drives the development of a self-contained artwork.

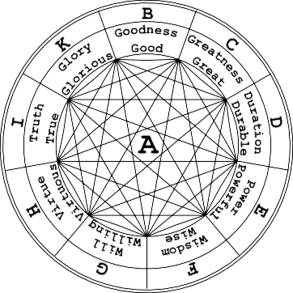

The method of generative design has been traced back to Ramon Llull, the 13th-century Catalan philosopher, logician and theologian, who became famous through his teaching of the so-called ars magna. We could call the mechanism invented by Llull a sort of hardware program: he designed a mechanical apparatus for the combinatory generation of true sentences. To this end the fundamental terms and principles of all cosmological knowledge were arranged around the edge of a circular disc. A second, smaller disc, repeating the order of the first and adding some terms, was mounted upon the bigger one. Through simple rotation of these wheels, new positions and combinations were produced automatically: new truths, as Llull called them. With a total of 14 wheels, Llull could generate any complex notional combination as the logical foundation of the arts as well as the sciences. In fact it was the brilliant Llull who could, in this way, read off successively all potential attributes of God, from a determined (!) set of possibilities. The interesting, because formal, aspect of Llull’s wheel, which transforms logic into an ars inveniendi, lies in the mechanism, which combined the elements without considering any content.

Figure 1: The first combinatory figure (Ramon Llull)
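The purely formal character of the wheel mechanism can be made tangible in a few lines of code. The following is only a minimal sketch under simplifying assumptions: both discs carry the same terms (the added terms of the smaller disc are left out), the three example terms are chosen for illustration, and the function names `align` and `all_pairings` are hypothetical.

```python
from typing import List, Tuple


def align(outer: List[str], inner: List[str], shift: int) -> List[Tuple[str, str]]:
    """Pair each outer term with the inner term facing it after rotating by `shift` steps."""
    n = len(inner)
    return [(outer[i], inner[(i + shift) % n]) for i in range(len(outer))]


def all_pairings(outer: List[str], inner: List[str]) -> List[Tuple[str, str]]:
    """Turn the inner disc through every position and collect what faces what."""
    pairs: List[Tuple[str, str]] = []
    for shift in range(len(inner)):
        pairs.extend(align(outer, inner, shift))
    return pairs


# Illustrative terms only; the mechanism never looks at their meaning.
terms = ["goodness", "greatness", "eternity"]
pairs = all_pairings(terms, terms)
```

The code treats the terms as opaque labels, which is the point: the combinations are generated without considering any content.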

2. To continue with John von Neumann

For the purposes of our theorizing, it seems appropriate to move forward in history to the point where programming came to mean software programming for electronic devices.

Although he did not use the term ‘programming’, it was the mathematician John von Neumann who developed the idea of software programming as it is still implemented in most computers today. To this end he introduced the so-called von Neumann architecture, a stored-program concept of a system architecture in which programs as well as data are located in the same accessible storage.

His influential texts from 1947 to his death in 1957 build up a context of programming in relation to mathematics, engineering, neurophysiology and genetics, and they could also give some insight into what could be meant by generative design. Von Neumann noted in the definitions of a ‘very high speed automatic digital computing system’:

»Once these instructions are given to the device, it must be able to carry them out completely without any need for further intelligent human intervention. At the end of the required operations the device must record the results again in one of the forms referred to above. The results are numerical data; they are a specified part of the numerical material produced by the device in the process of carrying out the instructions referred to above« [1].

According to the main points of the

von Neumann machine, the computer has to perform a cycle of events: firstly it

fetches an instruction and the required data from the memory, then it executes

the instructions upon the data and stores the results in the memory; to loop

this cycle it goes back to the start.
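The cycle described above can be sketched as a toy stored-program machine. This is an illustration only, not a model of the EDVAC: the four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`), the single accumulator and the function name `run` are assumptions made for the example. What it does show is that instructions and data share one memory, and that the machine loops through fetch, execute and store without further intervention.

```python
from typing import List


def run(memory: List[object]) -> List[object]:
    """Execute the program stored in `memory` until HALT; return the final memory."""
    pc = 0    # program counter: address of the next instruction
    acc = 0   # accumulator: the single working register of this toy machine
    while True:
        op, arg = memory[pc]          # fetch the instruction from memory
        pc += 1
        if op == "LOAD":              # execute: read data from the same memory
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":           # store the result back into memory
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op}")
        # falling through here loops back to the next fetch


# Cells 0-3 hold the program, cells 4-6 hold data: one shared storage.
memory = [("LOAD", 4), ("ADD", 5), ("STORE", 6), ("HALT", None), 2, 3, 0]
result = run(memory)
```

After the run, cell 6 holds the sum of cells 4 and 5.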

As programming is about the

manipulation of symbols, it is bound to a medium, be that 0 and 1, the Morse

code or any other alphabet, which defines a set of possibilities out of which a

selection can be made. This selection has the formal constitution of an

instruction or an algorithm, which, from von Neumann on, means the

operativeness of all formalizable ideas.

But the machine must be told in

advance, and in great detail, the exact series of steps required to perform the

algorithm. The series of steps is the computer program.

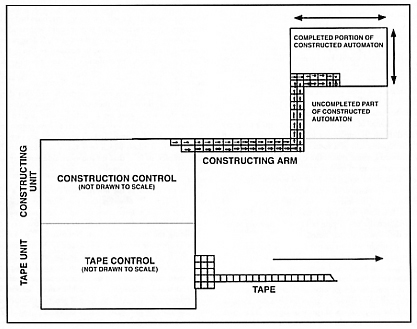

Figure 2: Universal construction in the cellular automata model of machine replication (John von Neumann)

Illustrating the concept of his machine, von Neumann drew an analogy to the nervous system: the neurons of the higher animals are definitely elements in the sense of those elements needed for a digital computing device. Such elements have to react to a certain kind of input, called a stimulus, in an all-or-none way, that is, in one of two states: quiescent or excited. In the draft of 1945 von Neumann actually designed a device composed of specialized organs, which was able to perform the arithmetical operations of addition, subtraction, multiplication and division. He mentioned a central control organ for the logical control of the device, a memory organ for carrying out long and complicated sequences of operations, and transfer organs [2].

Actually, in biologizing the digital device, or the other way round, in computerizing the nervous system, von Neumann consequently approached the revolutionary question of whether a living organism is comparable to an artificial machine. In 1948 he gave a lecture titled “The General and Logical Theory of Automata” on the occasion of the “Hixon Symposium on Cerebral Mechanisms in Behavior.” He introduced his design of a universal constructor, a self-replicating machine in a cellular automata environment. The machine was defined as using 29 states, which should provide signal transfer and logical operations and which should act upon signals as bit streams. The most interesting feature of this concept was the ‘tape’, actually the description of the universal constructor, or in other words the encoding of the sequence of actions to be performed by the machine. In the eyes of the machine, it must have been a kind of self-specification and auto-instruction.
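The role of the tape can be hinted at with a deliberately small sketch. It is not an implementation of the 29-state cellular automaton; it only illustrates the logical point that the description is used in two ways, once interpreted as instructions for building and once copied uninterpreted into the offspring. The names `construct` and `replicate` and the instruction strings are hypothetical.

```python
from typing import Dict, List

Tape = List[str]  # the description: a sequence of build instructions


def construct(tape: Tape) -> Dict[str, List[str]]:
    """Constructor: interpret the tape to build a body, then attach a copy of the tape."""
    body = [f"part:{instruction}" for instruction in tape]  # tape read as instructions
    return {"body": body, "tape": list(tape)}                # tape copied as mere data


def replicate(machine: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """A machine reproduces by running the constructor on its own description."""
    return construct(machine["tape"])


parent = construct(["arm", "sensor", "control"])
child = replicate(parent)
grandchild = replicate(child)
```

Because every offspring carries its own copy of the description, it can replicate in turn; the constructor and its description remain separate things throughout.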

Von Neumann’s cellular automata have been regarded as modelling an environment appropriate for demonstrating the logical requirements of machine self-replication. Theoretical biology and artificial life research have identified von Neumann’s automaton, and the separation of a constructor from its own description, as a useful treatment of open-ended evolution.

And it is the conceptual separation of a constructor from its own description which takes us back to design theory, as it represents, or even more, as it formalizes operations of symbolization, abstraction and conceptualization, which characterize modern culture.

3. Minimal Art and Algorithms

Who other than Marcel Duchamp comes to mind as an analogue when it comes to revolutionizing the art system by separating the concept, that is, the description of the artwork, from the artwork itself? And who else could be regarded as the paragon of fields like Conceptual Art, Fluxus and Minimal Art? This is not the place to describe the complex strategies of Marcel Duchamp in detail, but only to emphasize the common aspect of his work:

Duchamp rejected the ‘retinal art’

as being stagy, and declared the concept, the idea, the description or

instruction of an artwork to be preferred to the artwork itself. He

demonstrated the mechanisms and algorithms of art as art, and it seems to us,

that, in this way, he executed the analogous separation of the constructor (the

realization of an artwork) from its description (concept of the artwork)

formalized by John von Neumann.

But it was Marcel Duchamp who was thirty years ahead, and who even radicalized the concept by introducing the readymade strategy, replacing the artwork with the pure declaration of an artwork. There is not even a concept or description left, only the appellation. In 1917 he bought a urinal at the J. L. Mott Iron Works, called it ‘Fountain’, attributed it to ‘Richard Mutt’ and submitted it for the annual exhibition of the Society of Independent Artists in New York.

The philosopher and art theorist Boris Groys [3] credits Marcel Duchamp with having introduced the binary code of 0 and 1 to the art system through his readymade strategy. His single sovereign and subjective decision declares a profane object an artwork, just like yes or no, art or no art.

One might think it would not take long until this decision was set in the context of a formal logic or semiotic system, in the context of an artificial language. But it took until the sixties for the next step to be taken: the step to art as a systemic and code-guided, as a generative practice. The reasons, Groys explains, are manifold: artists and theorists of post-avant-garde surrealism declared erotic desire to be the engine of the artistic decision. At the same time these decisions were politicized. Not until the postwar era, not until the first high-level programming languages were developed, was it possible to describe the individual artistic decision in terms of formal, logical, and semiotic systems.

Groys states: »The lonesome,

irreducible and undisputable decision of the autonomous artistic subjectivity

was replaced by an explicit, traceable, rule guided, algorithmic operation,

which could be read off in the artwork.« [4]

In this way the arts and the computer revolution came together in the sixties, when the first electronic devices became widely accepted and the minimalist art of Donald Judd finally challenged the avant-garde attitude of negation. Minimal Art took another line: variation. Donald Judd presented an artwork as a series of binary decisions, as the result of an algorithmic loop transforming the same object into a series of variants. Actually it was not the object that Judd meant, but the code of transformation itself. It was the in-between, the transition from one object to another, that moved to the center of minimal artistic interest.

It is von Neumann’s separation of

the constructor from the instruction clarified in a virtually endless

iteration. It is the art of a computer program without a computer.

Boris Groys calls Donald Judd’s minimal art generative in that it is able to produce variants endlessly and, this being the main point, in that its code is somehow observable for the viewer. Thus it is the viewer who theoretically becomes able to imagine by himself all the variations of the code that are evoked but not yet realized. In this way the aspect of generativeness is not limited to the production process but is opened up to the process of reception, or better, to some kind of continuation. In the terminology of the sixties and seventies, one might as well take this as a form of participation. And to participate in an installation by Donald Judd is to walk around the objects, to anticipate the algorithm and to think of all the variants still to be realized for this open art project.

Actually, art conceived as a ‘project’ implies, besides participation, re-technization, which means the history of computer art from this point on.

4. Arte Programmata and Participation

We have to engage further with the aspect of participation, focusing on actual generative art as not just a method of making art but also an invitation to the viewer to continue or carry on the work.

We would therefore like to turn to another field of sixties art, which emphasized the aspect of participation and which described its methods as programming.

Coming from kinetic art and from cybernetics, the French ‘Groupe de recherche d’art visuel’ (GRAV), founded in 1960, worked on game theory, on information theory, and on combinatory analyses in order to reform their artistic strategies. Vera Molnar, co-founder and member of GRAV, explored heuristic methods and problem-solving techniques for her artistic interventions. She is said to have worked in a series of small probing steps, analogous to our description of software programming above. After evaluating one step, Molnar went on by varying only one parameter for the next step, and so developed an artwork step by step. Stripping the content away from the visual image, she focused on seeing and perceiving.
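The procedure of small probing steps can be sketched as follows. This is only an illustration under stated assumptions: the parameters `rotation` and `spacing`, the step sizes and above all the `score` function are hypothetical stand-ins, since the evaluation of a step was made by the artist and not by a formula.

```python
from typing import Callable, Dict, List

Params = Dict[str, float]


def probe_step(params: Params, name: str, delta: float,
               score: Callable[[Params], float]) -> Params:
    """Vary exactly one parameter; keep the variant only if it is evaluated as better."""
    candidate = dict(params)
    candidate[name] += delta
    return candidate if score(candidate) > score(params) else params


def develop(start: Params, deltas: Params,
            score: Callable[[Params], float], rounds: int) -> List[Params]:
    """Develop a work step by step, recording every accepted variant."""
    history = [start]
    for _ in range(rounds):
        for name, delta in deltas.items():
            for step in (delta, -delta):       # probe in both directions
                variant = probe_step(history[-1], name, step, score)
                if variant is not history[-1]:
                    history.append(variant)
    return history


# Hypothetical evaluation: prefers a rotation of 30 and a spacing of 5.
def score(p: Params) -> float:
    return -((p["rotation"] - 30) ** 2) - (p["spacing"] - 5) ** 2


history = develop({"rotation": 0, "spacing": 2}, {"rotation": 10, "spacing": 1}, score, rounds=5)
```

Each entry in `history` differs from its predecessor in exactly one parameter, so the development of the work can be read off as a sequence of single decisions.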

In thus adopting scientific research techniques, and in giving up intuition for rationalism, GRAV changed their self-conception as artists and changed what is called work organization. Cooperation and presentation as a group replaced solo work and the single signature. Interestingly for us, they opened up the object-oriented works to create situations that included the viewer in some sort of event or happening. The aim was to create game situations and game arrangements, so that the viewer or player could take part in works like ‘labyrinths’ (1963) or ‘Une journée dans la rue’ (1966). It was about the search for possibilities to involve the viewer in a spatial composition that we could call the playground. Thus the main theme for GRAV was to arrange game situations.

It seems to us a Lyotard comment before Lyotard when François Morellet, co-founder and member of GRAV, declared:

»Art for me only exhibits a social

function when it is demystified, when it gives the possibility to the viewer,

to actively participate, to strip the mechanisms, to discover the rules and

finally to perform« [5].

5. Generative Design and Gaming

Actually it was Lyotard who reintroduced the topic of the game, more precisely the topic of Wittgenstein’s ‘language games’, to describe communication beyond the ‘grand narratives’ of the modern era. He holds the aspects of play to be central to forms of pragmatic communication like questioning, promising, literary describing or narrating: there is no game without rules. There has to be a set of rules determining the use of the different communicative statements. The rules have to be agreed on by the players, who should contribute all statements as moves, and who should not make statements that touch the level of the rules.

If we look back to where we began,

we realize that it is the achievement of John von Neumann and Marcel Duchamp to

emphasize and to formalize (within mathematics and within arts) the separation

of the rule (description) from the move (object). In this way they both

introduced the game to their fields.

With regard to playing, Lyotard continues with a reflection on two kinds of innovation, an important aspect for design. Innovation can be achieved by introducing new statements, that is, through new moves during the game, and by modifying the rules of the game. The latter results in the constitution of a new and different game altogether.

We would like to describe generative design as being ‘organized’ [6] as communication, which is comparable to a game situation, beyond the political implications of Lyotard’s postmodern condition.

The point is not to realize every artwork as a game, but to introduce a playful openness on both sides of a work: on the side of production and on the side of reception or continuation. We recognize this feature as characteristic of all advanced generative design methods.

5.1 Strategy

To loop our argumentation, we restart with John von Neumann, this time with regard to game theory. In cooperation with Oskar Morgenstern he introduced to the social sciences a revolutionary approach, which should help to explain the complex phenomena of economic, political and social life. The methods appropriate for modelling observations made in the physical world obviously did not fit the concerns of human behavior. Social phenomena are determined by humans acting with each other or against each other, by dreams, hopes, fears and, not least, by differences in knowledge. Von Neumann and Morgenstern could prove the adequacy of the strategic game for describing social phenomena. To this end they developed a mathematical formalism which integrated both the human and the machine in search of the best moves to win the game.

What seems important to design theory is the possibility, within game theory, of depersonalizing the process of decision. Through the invention of a mechanism that sets the rules and frames the playground and the playtime, design could change from inventing to playing. Virtually complete strategies can be tested and evaluated through tactics, that is, through actual decisions and their consequences. Roles can be accepted, abandoned, or consistently changed. The distance established in the organization of roles is the distance of the actor from its actions, which means the space needed for the self-reflection of a player. Within playing it is possible to give all variants of a system a try and then to choose the favorite, never mind whether it is von Neumann’s minimax or maximin or neither. Interaction, imitation, cooperation, bluff, risk, and challenge: anything goes, as long as the rules are observed.
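For readers unfamiliar with the two terms, the following sketch computes maximin and minimax for a small two-person zero-sum game in pure strategies. The payoff matrix is an invented example (entries are the row player’s gains and the column player’s losses), and the function names are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[int]]


def maximin(payoff: Matrix) -> Tuple[int, int]:
    """Row player: choose the row whose worst-case payoff is largest. Returns (row, value)."""
    worst_cases = [min(row) for row in payoff]
    value = max(worst_cases)
    return worst_cases.index(value), value


def minimax(payoff: Matrix) -> Tuple[int, int]:
    """Column player: choose the column whose worst-case loss is smallest. Returns (column, value)."""
    worst_losses = [max(column) for column in zip(*payoff)]
    value = min(worst_losses)
    return worst_losses.index(value), value


# Invented payoff matrix for illustration.
payoff = [
    [3, 1, 4],
    [2, 0, 1],
    [5, 2, 3],
]
row, lower = maximin(payoff)
col, upper = minimax(payoff)
has_saddle_point = lower == upper  # both players' cautious choices meet in one cell
```

In pure strategies the maximin value can never exceed the minimax value. When the two coincide, as in this example, neither player gains by deviating alone; von Neumann’s minimax theorem guarantees such a coincidence for finite zero-sum games once mixed strategies are allowed.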

In fact, playing is about variety, as advanced games, when actually played (!), contain developments which are open-ended and unpredictable. Games actually exhibit emergence, to use the term in the sense of John Holland. So they make whole contexts available, they open up the field to its borders, and they are able to produce novelty.

Playing is the refuge of a

Buckminster Fuller kind of idealism, which, during the sixties, resulted in the

World Game, posing the question:

»How do we make the world work for

100% of humanity in the shortest possible time through spontaneous cooperation

without ecological damage or disadvantage to anyone?« [7]

John von Neumann could have proved

the impossibility of this aim within a few pages of calculation.

For generative design, however, we assume that playing within rules might be an open and productive strategy, which could take advantage of chance and which could, furthermore, be fun.

5.2 Chance

There is a long tradition of exploiting random processes and chance for design tasks, from Ramon Llull’s combinatorics, which we described at the beginning, to Mozart’s ‘Würfelmusik’ and Dada. But now it is the computer that simply simulates all forms of chance, be they mutations in evolutionary processes, stochastic calculations or simple number generators.
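How little is needed to simulate such a dice game with a number generator can be shown in a short sketch. It follows the general principle of musical dice games, in which two dice select one prewritten measure per bar from a lookup table. The table here is a placeholder, not a historical one: the measure labels, the length of 16 bars and the function names are assumptions for illustration.

```python
import random
from typing import Dict, List


def build_table(n_bars: int) -> List[Dict[int, str]]:
    """Placeholder table: for every bar, one prewritten measure per dice total (2 to 12)."""
    return [
        {total: f"bar{bar}-measure{total}" for total in range(2, 13)}
        for bar in range(n_bars)
    ]


def compose(table: List[Dict[int, str]], rng: random.Random) -> List[str]:
    """Roll two dice for each bar and pick the measure the table assigns to the total."""
    piece = []
    for options in table:
        total = rng.randint(1, 6) + rng.randint(1, 6)
        piece.append(options[total])
    return piece


table = build_table(16)
piece = compose(table, random.Random(1))  # a fixed seed makes the 'chance' repeatable
```

The rules (the table) are fixed in advance, while the realized piece depends on the sequence of throws; a different seed yields a different variant of the same work.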

Stanislaw Lem has developed a whole philosophy of chance, in which the author is a player in the sense of game theory, who tests different variants of moves, who actually tests possible transformations of the initial state of his work while considering tactical (micro-structural) and strategic (macro-structural) goals [8]. Thus the process of creation is defined as a peculiar and multiply undetermined construction process. In this complex process the system emerges as the growth of organization and at the same time as the reduction of susceptibility to noise. But on the other hand it is this random factor, as a generator of variety, which allows development.

As long as no equilibrium has been found, the artwork remains in self-transforming motion.

This is not the place to engage further in this fascinating theory, but one aspect should be added: Lem is sure that the (literary) artwork is identical to the mental operations of its reading. This is a truly cybernetic concept, in accordance with Claude Shannon’s information and Max Bense’s aesthetic object, and it seems extremely playful and generative to us.

5.3 Pleasure

To complete our fast and

rough-textured thoughts we would like to mention the role of pleasure or

happiness within playing.

Ali Irtem, Turkish cyberneticist,

states:

»The amount of happiness could be

seen as a measure of the adaptability of a cybernetic system at a given moment,

corresponding to the degree of efficiency in a mechanical system.« [9]

His happiness machinery might be a

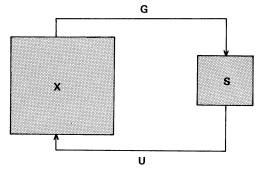

nice example of a playful and open system design:

»System X can be seen as a man who

can only be happy when the condition p (p may also be a set of desires) is

attained. This condition, however, cannot yet be realized in system X; we

therefore have to amplify X’s amount of happiness through an additional and

appropriate regulation device. In order to do this, we have to couple system X

with another system S – S could be a woman – through channels G and U so that

each affects the other.

The new system S is able to attain a

state of equilibrium or happiness if the condition q is attained (again q could

be a set of conditions). Suppose now linkage G will allow q to occur in S if,

and only if, the happiness condition p occurs in X; S’s power of veto,

according to Ashby, now ensures that any state of happiness of the whole must

imply the happiness condition p in X.« [9]

Figure 3: The happiness machinery for amplifying happiness (Ali Irtem)

Irtem admits some limitations:

»A man’s or woman’s capacity as a

regulator cannot exceed his or her capacity as a channel of communication.

Anyway, the amplification rate of happiness by coupling, including marriage,

seems to be limited. Probably for this reason team-work is recommended, though

polygamy and harems are not allowed in many countries. Nevertheless, machines

and human beings must come together, in order to increase their intelligence

and to amplify their happiness.« [10]

References

[1] John von Neumann: First Draft of a Report on the EDVAC, Pennsylvania 1945, 1.2

[2] John von Neumann: First Draft of a Report on the EDVAC, Pennsylvania 1945, 2.2-2.6, 7.6

[3] Boris Groys: Mimesis des Denkens, in: Munitionsfabrik 15, Staatliche Hochschule für Gestaltung Karlsruhe (ed.), Karlsruhe 2005

[4] Boris Groys: Mimesis des Denkens, in: Munitionsfabrik 15, Staatliche Hochschule für Gestaltung Karlsruhe (ed.), Karlsruhe 2005, p. 62/63: »Die einsame, unreduzierbare, unverfügbare Entscheidung der autonomen künstlerischen Subjektivität wurde durch einen expliziten, nachvollziehbaren, regelgeleiteten, algorithmischen Vorgang ersetzt, der im aus ihm resultierenden Kunstwerk ablesbar wurde.«

[5] Barbara Büscher: Vom Auftauchen des Computers in der Kunst, in: Kaleidoskopien 5, Ästhetik als Programm, Berlin 2004, p. 234: »Kunst hat für mich dann eine soziale Funktion, wenn sie entmystifiziert ist, wenn sie dem Betrachter die Möglichkeit gibt, tätig beteiligt zu sein, die Mechanismen auseinander zu nehmen, die Regeln zu entdecken und sie dann selber ins Spiel zu setzen«

[6] A form of self-organization. In his Introduction to Cybernetics, W. Ross Ashby describes communication directly as organization. W. Ross Ashby: An Introduction to Cybernetics, London 1956

[7] Buckminster Fuller cited on www.geni.org

[8] Stanislaw Lem: Die Philosophie des Zufalls (Polish original 1975), Frankfurt/Main 1983, p. 78

[9], [10] Ali Irtem: Happiness, amplified cybernetically, in: Cybernetics, Art and Ideas, Jasia Reichardt (ed.), Greenwich 1971, p. 72ff

Literature

W. Ross Ashby: An Introduction to Cybernetics, London 1956

Gregory Bateson: Steps to an Ecology of Mind, Chicago 1972

Wolfgang Ernst: Barocke Kombinatorik als Theorie-Maschine, www.medienwissenschaft.hu-berlin.de 2003

Boris Groys: Über das Neue, Frankfurt/Main 1999

John Holland: Games and Numbers, in: Emergence, John Holland, Cambridge/Massachusetts 1998

W. Künzel/Peter Bexte: Allwissen und Absturz. Der Ursprung des Computers, Frankfurt/Main 1993

Stanislaw Lem: Die Philosophie des Zufalls (Polish original 1975), Frankfurt/Main 1983

John von Neumann/Oskar Morgenstern: Theory of Games and Economic Behavior (1953), Princeton 1970

Jasia Reichardt (ed.): Cybernetics, Art and Ideas, Greenwich 1971